PyTorch CNN实战之MNIST手写数字识别示例

简介

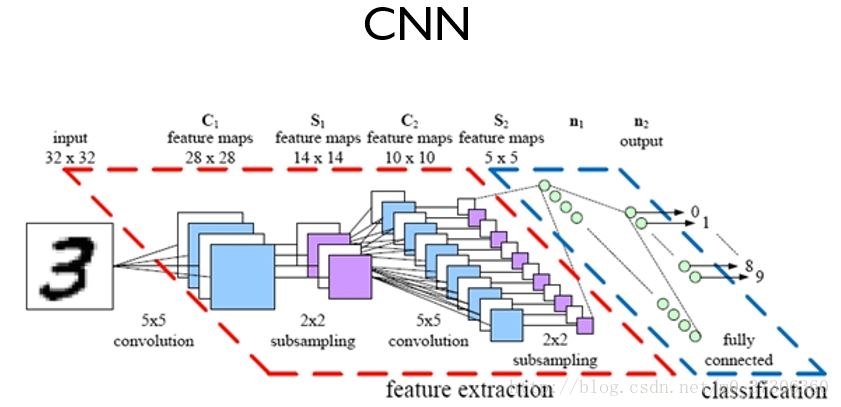

卷积神经网络(Convolutional Neural Network, CNN)是深度学习技术中极具代表的网络结构之一,在图像处理领域取得了很大的成功,在国际标准的ImageNet数据集上,许多成功的模型都是基于CNN的。

卷积神经网络CNN的结构一般包含这几个层:

- 输入层:用于数据的输入

- 卷积层:使用卷积核进行特征提取和特征映射

- 激励层:由于卷积也是一种线性运算,因此需要增加非线性映射

- 池化层:进行下采样,对特征图稀疏处理,减少数据运算量。

- 全连接层:通常在CNN的尾部进行重新拟合,减少特征信息的损失

- 输出层:用于输出结果

PyTorch实战

本文选用上篇的数据集MNIST手写数字识别实践CNN。

import torch

import torch.nn as nn

import torch.nn.functional as F

import torch.optim as optim

from torchvision import datasets, transforms

from torch.autograd import Variable

# Training settings

batch_size = 64

# MNIST Dataset

train_dataset = datasets.MNIST(root='./data/',

train=True,

transform=transforms.ToTensor(),

download=True)

test_dataset = datasets.MNIST(root='./data/',

train=False,

transform=transforms.ToTensor())

# Data Loader (Input Pipeline)

train_loader = torch.utils.data.DataLoader(dataset=train_dataset,

batch_size=batch_size,

shuffle=True)

test_loader = torch.utils.data.DataLoader(dataset=test_dataset,

batch_size=batch_size,

shuffle=False)

class Net(nn.Module):

def __init__(self):

super(Net, self).__init__()

# 输入1通道,输出10通道,kernel 5*5

self.conv1 = nn.Conv2d(1, 10, kernel_size=5)

self.conv2 = nn.Conv2d(10, 20, kernel_size=5)

self.mp = nn.MaxPool2d(2)

# fully connect

self.fc = nn.Linear(320, 10)

def forward(self, x):

# in_size = 64

in_size = x.size(0) # one batch

# x: 64*10*12*12

x = F.relu(self.mp(self.conv1(x)))

# x: 64*20*4*4

x = F.relu(self.mp(self.conv2(x)))

# x: 64*320

x = x.view(in_size, -1) # flatten the tensor

# x: 64*10

x = self.fc(x)

return F.log_softmax(x)

model = Net()

optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

def train(epoch):

for batch_idx, (data, target) in enumerate(train_loader):

data, target = Variable(data), Variable(target)

optimizer.zero_grad()

output = model(data)

loss = F.nll_loss(output, target)

loss.backward()

optimizer.step()

if batch_idx % 200 == 0:

print('Train Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}'.format(

epoch, batch_idx * len(data), len(train_loader.dataset),

100. * batch_idx / len(train_loader), loss.data[0]))

def test():

test_loss = 0

correct = 0

for data, target in test_loader:

data, target = Variable(data, volatile=True), Variable(target)

output = model(data)

# sum up batch loss

test_loss += F.nll_loss(output, target, size_average=False).data[0]

# get the index of the max log-probability

pred = output.data.max(1, keepdim=True)[1]

correct += pred.eq(target.data.view_as(pred)).cpu().sum()

test_loss /= len(test_loader.dataset)

print('\nTest set: Average loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format(

test_loss, correct, len(test_loader.dataset),

100. * correct / len(test_loader.dataset)))

for epoch in range(1, 10):

train(epoch)

test()

输出结果:

Train Epoch: 1 [0/60000 (0%)] Loss: 2.315724

Train Epoch: 1 [12800/60000 (21%)] Loss: 1.931551

Train Epoch: 1 [25600/60000 (43%)] Loss: 0.733935

Train Epoch: 1 [38400/60000 (64%)] Loss: 0.165043

Train Epoch: 1 [51200/60000 (85%)] Loss: 0.235188Test set: Average loss: 0.1935, Accuracy: 9421/10000 (94%)

Train Epoch: 2 [0/60000 (0%)] Loss: 0.333513

Train Epoch: 2 [12800/60000 (21%)] Loss: 0.163156

Train Epoch: 2 [25600/60000 (43%)] Loss: 0.213840

Train Epoch: 2 [38400/60000 (64%)] Loss: 0.141114

Train Epoch: 2 [51200/60000 (85%)] Loss: 0.128191Test set: Average loss: 0.1180, Accuracy: 9645/10000 (96%)

Train Epoch: 3 [0/60000 (0%)] Loss: 0.206469

Train Epoch: 3 [12800/60000 (21%)] Loss: 0.234443

Train Epoch: 3 [25600/60000 (43%)] Loss: 0.061048

Train Epoch: 3 [38400/60000 (64%)] Loss: 0.192217

Train Epoch: 3 [51200/60000 (85%)] Loss: 0.089190Test set: Average loss: 0.0938, Accuracy: 9723/10000 (97%)

Train Epoch: 4 [0/60000 (0%)] Loss: 0.086325

Train Epoch: 4 [12800/60000 (21%)] Loss: 0.117741

Train Epoch: 4 [25600/60000 (43%)] Loss: 0.188178

Train Epoch: 4 [38400/60000 (64%)] Loss: 0.049807

Train Epoch: 4 [51200/60000 (85%)] Loss: 0.174097Test set: Average loss: 0.0743, Accuracy: 9767/10000 (98%)

Train Epoch: 5 [0/60000 (0%)] Loss: 0.063171

Train Epoch: 5 [12800/60000 (21%)] Loss: 0.061265

Train Epoch: 5 [25600/60000 (43%)] Loss: 0.103549

Train Epoch: 5 [38400/60000 (64%)] Loss: 0.019137

Train Epoch: 5 [51200/60000 (85%)] Loss: 0.067103Test set: Average loss: 0.0720, Accuracy: 9781/10000 (98%)

Train Epoch: 6 [0/60000 (0%)] Loss: 0.069251

Train Epoch: 6 [12800/60000 (21%)] Loss: 0.075502

Train Epoch: 6 [25600/60000 (43%)] Loss: 0.052337

Train Epoch: 6 [38400/60000 (64%)] Loss: 0.015375

Train Epoch: 6 [51200/60000 (85%)] Loss: 0.028996Test set: Average loss: 0.0694, Accuracy: 9783/10000 (98%)

Train Epoch: 7 [0/60000 (0%)] Loss: 0.171613

Train Epoch: 7 [12800/60000 (21%)] Loss: 0.078520

Train Epoch: 7 [25600/60000 (43%)] Loss: 0.149186

Train Epoch: 7 [38400/60000 (64%)] Loss: 0.026692

Train Epoch: 7 [51200/60000 (85%)] Loss: 0.108824Test set: Average loss: 0.0672, Accuracy: 9793/10000 (98%)

Train Epoch: 8 [0/60000 (0%)] Loss: 0.029188

Train Epoch: 8 [12800/60000 (21%)] Loss: 0.031202

Train Epoch: 8 [25600/60000 (43%)] Loss: 0.194858

Train Epoch: 8 [38400/60000 (64%)] Loss: 0.051497

Train Epoch: 8 [51200/60000 (85%)] Loss: 0.024832Test set: Average loss: 0.0535, Accuracy: 9837/10000 (98%)

Train Epoch: 9 [0/60000 (0%)] Loss: 0.026706

Train Epoch: 9 [12800/60000 (21%)] Loss: 0.057807

Train Epoch: 9 [25600/60000 (43%)] Loss: 0.065225

Train Epoch: 9 [38400/60000 (64%)] Loss: 0.037004

Train Epoch: 9 [51200/60000 (85%)] Loss: 0.057822Test set: Average loss: 0.0538, Accuracy: 9829/10000 (98%)

Process finished with exit code 0

参考:https://github.com/hunkim/PyTorchZeroToAll

以上就是本文的全部内容,希望对大家的学习有所帮助,也希望大家多多支持脚本之家。

关注微信公众号获取更多VSCode编程信息,定时发布干货文章

全部评论